Some thoughts on Game Theory & Economics

Readers will probably already be familiar with the fundamental economic notions of supply and demand, of diminishing marginal utility/productivity, and so on. I also hope readers understand that free will and the "laws" of economics are mutually compatible. John Ruskin, in Unto This Last (1862), complained that economics had no room for meritorious or selfless motives; there was no room for solidarity between employers and laborers, for example:

Among the delusions which at different periods have possessed themselves of the minds of large masses of the human race, perhaps the most curious—certainly the least creditable—is the modern soi-disant science of political economy, based on the idea that an advantageous code of social action may be determined irrespectively of the influence of social affection.

However, Ruskin's understanding was imperfect: non-economic, or counter-economic, motives (such as emotional affinity for one's employees) are not incompatible with the concept of labor markets per se; rather, such motives tend to average out. For example, if some people boycott Starbucks, others are just as likely to develop "consumer loyalty" to the same firm, or a "social affection" for the staff of a particular franchise. Nor is Ruskin's objection convincing with regard to the labor market: if some firm, such as Old Fezziwig's, pays its workers more than it absolutely must to prevent their starvation or flight, then Old Fezziwig can employ fewer people, which pushes the general level of wages down by a very slight amount.

(There are other problems with classical economics, but Ruskin doesn't really know what they are.)

Economics needs to be understood not as a deterministic prediction of how people will behave given the prices of things they like, but rather as an explanation of the tradeoffs they make. Suppose, for instance, that a population holds a stable set of moral commitments (like a desire to avoid polluting the environment) at a stable intensity. A decline in the price of gasoline will still result in an increase in gasoline consumption. That's because people, however much they may dislike consuming petrol, now get more of it, and hence more utility, for each dollar spent. And even if a few diehards are determined to buy absolutely, positively no more than x liters of the stuff per week, no matter what, enterprises using it will still be able to buy more; indeed, if they have the choice, they might, say, transport more passengers by leasing more cars as cabs or express vans.
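The diehard argument can be checked with a little arithmetic. The sketch below is purely illustrative: the linear demand of price-responsive buyers, the number of diehards, and their weekly cap are all hypothetical numbers, not data. It shows that once the diehards hit their cap, aggregate consumption still rises as the price falls.

```python
# All numbers are hypothetical, chosen only to illustrate the argument.

def flexible_demand(price, intercept=100.0, slope=20.0):
    """Liters per week bought by price-responsive households and firms (linear demand)."""
    return max(0.0, intercept - slope * price)

def diehard_demand(price, cap=5.0, n_diehards=10):
    """Each diehard would like more gasoline as it gets cheaper, but never buys more than `cap`."""
    desired = max(0.0, 10.0 - 2.0 * price)
    return n_diehards * min(cap, desired)

def total_demand(price):
    return flexible_demand(price) + diehard_demand(price)

for p in (3.0, 2.5, 2.0):
    print(f"price {p:.2f}: diehards {diehard_demand(p):.1f} L, total {total_demand(p):.1f} L")
```

With these made-up numbers the diehards stop responding below a price of 2.50, yet total consumption still climbs from 100 to 110 liters as the price falls to 2.00, because everyone else keeps buying more.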

However, at the level of business institutions (including, for example, labor unions, banks, and governing bodies in the national economy), economics can make few meaningful predictions. True, the average firm will (over a long enough time horizon) expand production until marginal cost equals marginal revenue; but there are many reasons why a particular firm, during a particular period of operation, will not. One reason is that the firm may be a specialty producer of things like ocean-going vessels or large buildings; its production levels may be controlled entirely by the local demand for a highly specialized product. There is no meaningful downward-sloping demand curve for such a business, since, even if it could cut costs and prices by two-thirds, the industry it supplies could still consume only the same number of hydrocracker units or tower cranes.
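For the "average firm" case, the MC=MR rule can be made concrete. This is a minimal sketch, assuming a hypothetical linear inverse demand P(Q) = a - bQ and a hypothetical quadratic cost C(Q) = cQ + dQ², so that MR = a - 2bQ and MC = c + 2dQ; none of these parameters come from the text.

```python
# Hypothetical model: inverse demand P(Q) = a - b*Q, total cost C(Q) = c*Q + d*Q**2.

def marginal_revenue(q, a, b):
    # Revenue R = (a - b*q) * q, so dR/dq = a - 2*b*q
    return a - 2 * b * q

def marginal_cost(q, c, d):
    # dC/dq = c + 2*d*q
    return c + 2 * d * q

def profit_maximizing_output(a, b, c, d):
    # Solve a - 2*b*q = c + 2*d*q for q
    return (a - c) / (2 * b + 2 * d)

def profit(q, a, b, c, d):
    return (a - b * q) * q - (c * q + d * q ** 2)

a, b, c, d = 100.0, 1.0, 10.0, 1.0
q_star = profit_maximizing_output(a, b, c, d)
print(q_star, marginal_revenue(q_star, a, b), marginal_cost(q_star, c, d))
```

Here output settles at 22.5 units, where MR and MC both equal 55. The specialty producer described above is exactly the case this formula misses: if demand does not respond to price at all, there is no MR curve for MC to cross.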

Another reason is strategic and temporal. Most firms cannot simply increase output by whatever amount they need to reach MC=MR. If you are the manager of a semiconductor fabrication firm, for example, you may want to increase output by 31.5%, but a new facility will increase output by 65%. If you stay where you are, marginal costs may be quite different from marginal revenues; but if you build the new facility, you'll be working with an entirely new set of cost and revenue curves. Even if you have reached a point where the advantages of such an expansion are totally certain, you may wish to wait until the industrial union has negotiated a new contract before announcing the new plant.
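The fab manager's dilemma can be sketched as a comparison of two discrete options rather than a smooth slide to MC=MR. Everything here is a hypothetical assumption: linear inverse demand P = 300 - Q, a constant marginal cost of 37, and a per-period fixed cost for the new plant. The numbers are picked so that the unconstrained optimum is 31.5% above current capacity while the only available plant adds 65%.

```python
# Hypothetical numbers throughout; a sketch of a "lumpy" capacity decision.
A = 300.0                  # inverse demand intercept: P = A - Q
C = 37.0                   # constant marginal cost per unit
CURRENT_CAPACITY = 100.0   # today's plant
EXPANDED_CAPACITY = 165.0  # a new facility adds 65% (indivisible)

def operating_profit(q):
    """Revenue minus variable cost at output q."""
    return (A - q - C) * q

def best_output(capacity):
    """Output where MR = A - 2Q equals MC = C, unless capacity binds first."""
    unconstrained = (A - C) / 2.0   # 131.5, i.e. 31.5% above current capacity
    return min(unconstrained, capacity)

def expansion_gain(fixed_cost):
    """Extra profit from building the new facility, net of its per-period fixed cost."""
    stay = operating_profit(best_output(CURRENT_CAPACITY))
    expand = operating_profit(best_output(EXPANDED_CAPACITY)) - fixed_cost
    return expand - stay

for fixed_cost in (500.0, 1500.0):
    print(fixed_cost, expansion_gain(fixed_cost))
```

At current capacity the firm is stuck where MR (100) exceeds MC (37); the new plant pays only if its per-period fixed cost is below 992.25. The union-contract timing consideration is not modeled.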

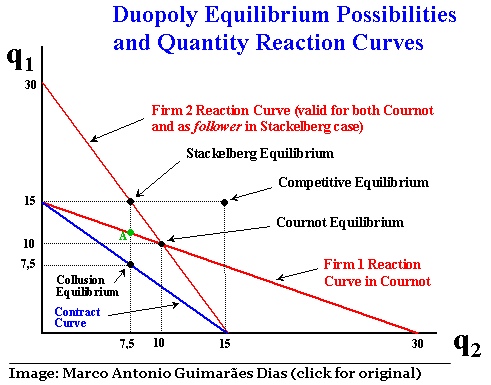

A third reason, probably the best known, has to do with solutions to the duopoly problem. If only two big firms sell a product, both will want to reach MC=MR; but MR varies with the firm's sales, so both duopolists and monopolists enjoy higher marginal revenue if sales are held artificially low. Unfortunately for the manager of either duopolist, the other firm wants you to reduce sales so that it can reap the higher marginal revenue, while you want it to do the same.

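The mathematician Antoine Augustin Cournot (1801-1877) proposed, in 1838, a solution to the question of how much each duopolist would sell: each firm chooses the output that is best given its rival's output, and the market settles where both choices are mutually consistent. Although it predates game theory as a field, his solution is now recognized as an early instance of what game theorists call a Nash equilibrium, and it anticipated the linkage between game theory (which supplied the optimal choices for individual actors) and economics (which anticipated the behavior of vast numbers of actors).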
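A minimal sketch of the Cournot solution, assuming (hypothetically) a linear inverse demand P = a - (q1 + q2) and constant unit costs c1 and c2. Each firm's best response to its rival's output q_j is (a - c_i - q_j) / 2; iterating best responses converges to the point where neither firm wants to change.

```python
def best_response(q_other, a, c_own):
    # Maximize (a - q - q_other - c_own) * q  =>  q = (a - c_own - q_other) / 2
    return max(0.0, (a - c_own - q_other) / 2.0)

def cournot_equilibrium(a, c1, c2, tol=1e-10, max_iter=10_000):
    """Iterate simultaneous best responses until outputs stop changing."""
    q1 = q2 = 0.0
    for _ in range(max_iter):
        new_q1 = best_response(q2, a, c1)
        new_q2 = best_response(q1, a, c2)
        if abs(new_q1 - q1) < tol and abs(new_q2 - q2) < tol:
            return new_q1, new_q2
        q1, q2 = new_q1, new_q2
    raise RuntimeError("best-response iteration did not converge")

q1, q2 = cournot_equilibrium(100.0, 10.0, 10.0)
print(round(q1, 6), round(q2, 6), round(100.0 - q1 - q2, 6))
```

With a = 100 and both costs at 10, each firm sells 30 units at a price of 40. A monopolist facing the same demand would sell 45 units at 55, which is why each duopolist wishes the other would cut back.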

Game theory was an exotic concept for economists until the 1950's. In 1951, mathematician John Nash introduced the Nash Equilibrium (more precisely, its mathematical explication); John Harsanyi later (1967-68) introduced a Bayesian approach to Nash equilibrium for games in which players have incomplete information about one another. The tremendous analytical opportunities opened by game theory and the digital computer transformed economics, as theorists sought to meld the study of individual behavior with the study of entire economies, to achieve something with far more predictive power. By the 1960's, Carnegie Mellon University professor John Muth had introduced the concept of "rational expectations," which sought to represent the economy as a scalar multiple of average actors; this idea was examined in far greater detail by Edward Prescott, who also tried to restore neoclassical economics. In effect, the new economists of the 1970's expanded microeconomic analysis to cover the behavior of the entire national economy.

The evolution of game theory and economics continued to drive most research, ultimately superseding "rational expectations." After the mid-90's, adherents of the new classical economics tended to downplay "rational expectations" in favor of "stochasticity" and "dynamic general equilibrium" (DGE) analysis [*]. At the same time, the New Keynesian model has incorporated both DGE and stochasticity; but since its proponents do not believe policy is ineffective, it retains the ideological rift with the likes of Prescott et al. Like the new classical models, however, it contains an element of expectation. As it happens, expectations apply to prices and wages as well as to future interest rates and inflation, so the New Keynesians could retain the old concept of sticky prices (and hence, adjustment of the economy to equilibrium via quantities, rather than fluid prices).

WHO WINS THE "GAME"? NEW CLASSICAL? OR NEW KEYNESIAN?

("New classical" is an economic analysis that emerged after 1961 and became prevalent in the USA after 1978; "neoclassical" is a synthesis of "marginalism" and an emerging understanding of monopolistic markets, etc., which was prevalent from 1890 to 1936.)

Game theory can push economics in different directions. As understood by the likes of Oskar Morgenstern, Robert Barro, and Vernon Smith, game theory was used to argue that monetary policy would be thwarted by financial arbitrage, and that social welfare programs tended to expose economically virtuous actors to blackmail by economically weak ones.

(The "economic loser" theory, in which a socially optimal technology is blocked by an economic class whose rents are threatened by it, is discussed and refuted in a paper (PDF) by Acemoglu & Robinson.)

From the other direction, Matthew McCartney points out that, because behavior under game theory is interconnected, multiple equilibria are assured, leaving the state with the obligation to ensure that the one that prevails corresponds to social goals. Likewise, Anatol Rapoport, the noted game theorist, repeatedly treated confrontation as an institution in and of itself, one that guided the formation of confrontation-promoting institutions. Cooper & John, in "Coordinating Coordination Failures in Keynesian Models" (1988, PDF), introduced the concept of "strategic complementarity" to explain involuntary unemployment. As McCartney notes, these theories place an extraordinary burden of rationality on the individual, as well as on the individual's self-knowledge. Faced with everyday experience showing that most individuals lack either the rationality or the opportunity to make predictions that are accurate on average, both camps justify their assumptions by anticipating that some economic agent will identify arbitrage opportunities and capitalize on them.
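Why strategic complementarity yields multiple equilibria can be seen in a two-player coordination game. The payoffs below are hypothetical (this is not Cooper & John's model): each player chooses "High" or "Low" activity, and each player's best reply is to match the other. Enumerating the strategy profiles and checking for profitable unilateral deviations finds two pure-strategy Nash equilibria, one clearly better than the other.

```python
from itertools import product

STRATEGIES = ("High", "Low")

# Hypothetical payoffs: (row player, column player) for each strategy profile
PAYOFFS = {
    ("High", "High"): (4, 4),
    ("High", "Low"): (0, 3),
    ("Low", "High"): (3, 0),
    ("Low", "Low"): (2, 2),
}

def is_nash(profile):
    """True if neither player can gain by deviating on their own."""
    s1, s2 = profile
    u1, u2 = PAYOFFS[profile]
    row_ok = all(PAYOFFS[(alt, s2)][0] <= u1 for alt in STRATEGIES)
    col_ok = all(PAYOFFS[(s1, alt)][1] <= u2 for alt in STRATEGIES)
    return row_ok and col_ok

def pure_nash_equilibria():
    return [p for p in product(STRATEGIES, repeat=2) if is_nash(p)]

print(pure_nash_equilibria())
```

Both (High, High) and (Low, Low) are self-sustaining; nothing inside the game selects the better one, which is the gap McCartney assigns to the state.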

But even the notion of arbitrage does not save rational expectations. That's because a prediction only becomes an arbitrage opportunity when discovery (i.e., the event actually happening) proves the prediction true or false. An example is the commodity options market. We can assume the options market for crude oil captures all available information for predicting the spot price of oil on 1 June 2006. But when 6/01/06 rolls around, the inferred price (i.e., the price at which the 6-month option price spread was most advantageous) will probably be wrong, and the fact that a lucky few got it right contributes nothing to technical efficiency in the oil industry. A more compelling example is the impact of Volcker's money-supply growth targeting in 1980, which could easily have been foreseen, but was not.

However, game theory is valuable when we are trying to assess reactions to uncertainty. Moreover, as with economic theory generally, game theory is far more useful for examining why institutions fail to achieve desired results. Both are effective as instruments of analysis; they are dubious as elements of positive science. Hence the typical confusion of critics: physics allows people to make moon landings, while economics offers an ingenious tool for defending absolutely any course of action; neither economics nor game theory can be falsified by observation, whereas physics can be. The flaw in this reasoning is, of course, that while the social sciences are necessary, they are quasi-legalistic: whereas a physicist or chemist is productive regardless of whether anyone else understands physics or chemistry, an economist is useful only in a population of other economists. The same may be said of lawyers; a single lawyer, entrusted with unilateral power to make decisions, is not particularly likely to make good ones, and lawyers are constantly disagreeing with one another, yet (I speak in complete seriousness) a nation without lawyers is virtually ungovernable. Again, one requires a structure run by legally trained people to administer law; but there is no such thing as a controlled experiment for ensuring a legal decision is correct.

The ability of an economist to supply useful information about system failure stems from the techniques of analysis and scrutiny that economics has developed. The ability to compare rival historical forces (such as rising supply and rising demand for a good, which have contradictory effects on price) and establish which one is more important, the ability to render dissimilar situations comparable for purposes of relevant comparison, and the ability to use mathematical models to test assumptions for absurdity are the implements that economics brings to the social sciences. Game theory does the same thing, albeit more starkly.
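Comparing rising supply with rising demand is a good example of the kind of reckoning meant here. A minimal sketch, assuming hypothetical linear curves Qd = a - bP and Qs = e + fP: the equilibrium price is P* = (a - e) / (b + f), so a demand shift raises it by Δa / (b + f), a supply shift lowers it by Δe / (b + f), and whichever shift is larger wins.

```python
def equilibrium(a, b, e, f):
    """Linear demand Qd = a - b*P and supply Qs = e + f*P; returns (P*, Q*)."""
    price = (a - e) / (b + f)
    quantity = a - b * price
    return price, quantity

def net_price_change(a, b, e, f, demand_shift, supply_shift):
    """Change in equilibrium price when demand and supply both shift outward."""
    before, _ = equilibrium(a, b, e, f)
    after, _ = equilibrium(a + demand_shift, b, e + supply_shift, f)
    return after - before

# Hypothetical market: demand rises by 15 units at every price in both cases
print(net_price_change(100.0, 2.0, 10.0, 1.0, demand_shift=15.0, supply_shift=6.0))
print(net_price_change(100.0, 2.0, 10.0, 1.0, demand_shift=15.0, supply_shift=21.0))
```

In the first case demand outruns supply and the price rises by 3; in the second, supply outruns demand and the price falls by 2. The same two "forces" produce opposite verdicts depending only on their relative size.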

New-classical economists will not take well to my previous two paragraphs. A few might nod in agreement, then insist that economics is good at identifying costly frictions (such as rent control or minimum wage rates) that can prevent full employment; but beyond that, they'd have a hard time explaining why economics had anything to say after Jean-Baptiste Say and Frederic Bastiat died. The Keynesian, in contrast, would wonder where the IS-LM model fits into "supply[ing] useful information about system failure." Economists observing the economic implosion that began in the late 1920's largely agreed, from 1937 onward, that the failure to sustain adequate demand was responsible for the calamity, and they devised various proposals to safeguard demand in the future. I would observe that, when economists began conceiving social welfare programs to ensure minimal levels of demand and institutions to prevent global financial illiquidity, they were doing their jobs successfully. But when, having developed institutions to prevent fiscal and monetary failure, they assumed those institutions would always work, they made profound errors in their own field that led to another, albeit much smaller, economic crisis in the 1970's.

The crisis arose because economics had furnished what economists evidently took to be an instrument for solving the problem of the business cycle. It was so powerful that its use became addictive. Yet economists largely overlooked the dangers of overuse; they failed to see how the tool could be insidious. And when at last its restorative powers were exhausted, the entire profession simply recanted fiscal and monetary policy, en bloc. Since economists offered no alternative tools to political leaders (who demanded them), quasi-automatic fiscal policy and discretionary monetary policy simply continued to operate.

Today game theory is moving towards offering a meta-theory, or theory about theories. The question is no longer what the most appropriate judgment is in any particular scenario (from the perspective of an omnipotent authority), but rather what determines how a decision will be made. Instead of announcing the optimal decision under uncertainty, researchers are more interested in understanding what sorts of approaches will be used, and whether they are strategically different. The new field of neuroeconomics seeks to adapt utility theory so that it no longer presupposes rationality. Such a version of economics, in which actors operate within a system or institution, are constrained neurologically, and are limited to strategic behavior under uncertainty, would leave us about as far from classical economics as it is possible to get.

UPDATE (27 January 2006): Luka Crnic (Cherishing the Mundane) posts on the "hot topic" of neuro-economics. He begins with an article in The Economist:

No longer will economists rely on crude statistical models of how people behave in response to a policy change, such as an interest-rate rise or a tax increase. Instead, they will be able to peer directly into the brain to predict behaviour.

Here, neuro-economics is used as a research tool in "behavioral economics," i.e., the study of economic decisions made by individual actors. The original study of behavioral economics employed the familiar scheme of translating the researcher's individual hypothesis about economic behavior into a constrained optimization problem, then "modeling" economic events as if the nation consisted of 300 million identical humans. It was obviously a simplification, but it could be used to screen out categorically absurd scenarios. A landmark example is the work of Richard Thaler and Hersh Shefrin, which introduced such compelling ideas as the split personality ("planner-doer"); see also Thaler's Quasi-Rational Economics. Those interested in an introduction to behavioral economics can read "Behavioral Economics" (PDF) by Sendhil Mullainathan & Richard Thaler.

My impression is that further refinements in the understanding of economic behavior at the personal level would only lead to a tweaking of the constant relative risk aversion (CRRA) component of utility-maximization functions.
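To make "tweaking the CRRA component" concrete: CRRA utility is u(c) = c^(1-γ)/(1-γ), with log utility as the γ = 1 case, and a tweak amounts to changing γ. The sketch below computes the certainty equivalent of a hypothetical 50/50 gamble between 50 and 150 for several values of γ; the gamble and the γ values are illustrative assumptions.

```python
import math

def crra_utility(c, gamma):
    """CRRA utility c**(1-gamma)/(1-gamma); log utility is the gamma == 1 limit."""
    if c <= 0:
        raise ValueError("consumption must be positive")
    if gamma == 1:
        return math.log(c)
    return c ** (1 - gamma) / (1 - gamma)

def inverse_crra(u, gamma):
    """Consumption level that yields utility u under the same gamma."""
    if gamma == 1:
        return math.exp(u)
    return (u * (1 - gamma)) ** (1 / (1 - gamma))

def certainty_equivalent(outcomes, probs, gamma):
    """Sure amount the decision maker values as highly as the gamble."""
    expected_u = sum(p * crra_utility(c, gamma) for c, p in zip(outcomes, probs))
    return inverse_crra(expected_u, gamma)

gamble = ([50.0, 150.0], [0.5, 0.5])  # hypothetical gamble with mean 100
for gamma in (0.0, 1.0, 2.0, 5.0):
    print(gamma, round(certainty_equivalent(*gamble, gamma), 2))
```

A risk-neutral agent (γ = 0) values the gamble at its mean of 100; at γ = 2 the certainty equivalent falls to 75. The whole family of behaviors collapses into one parameter.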

In "Social Neuroscience and Neuroeconomics," Crnic outlines some of the actual research in neuro-economics, which does indeed include fMRI scans of people playing a game.

First of all, what role does caudate nucleus play?

The next day, in "The Consilience of Brain and Decision," Crnic reviews a paper of the same title; he outlines some of the practical principles, without spelling out how this might affect economic modeling or analysis. I really need to return to these and other posts by Crnic to understand them properly, especially since there are so many links I will need to read.

[Nature Neuroscience paper]: The human striatum has been implicated as a critical structure in trial-and-error feedback processing and reward learning. In particular, the caudate nucleus, a structure linked to learning and memory in both animals and humans has been shown to have a role in processing affective feedback with responses in this region varying according to properties such as valence and magnitude. It has been shown that activation in the human caudate nucleus is modulated as a function of trial-and-error learning with feedback.

This clearly indicates that the perception of the moral character of the partner in the trust game directly influences the neural mechanisms connected with feedback processing in trial-and-error learning

(cross-posted at Hobson's Choice)

NOTES:

Rationality: strictly defined in economics:

- [Transitivity] If a decision maker prefers A to B and prefers B to C, then she should prefer A to C;

- [Completeness] A decision maker can rank all bundles of goods: for any two bundles, she either prefers one to the other or ranks them equally. Equal rank is a valid ranking, and the decision maker is then "indifferent" between them.
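The two axioms can be checked mechanically for a finite set of bundles. In this sketch, a preference is represented (as an assumption for illustration) by a set of pairs (x, y) meaning "x is at least as good as y"; indifference is a pair listed in both directions. The bundles "A", "B", "C" and the cyclic example are hypothetical.

```python
from itertools import product

def is_complete(items, weakly_prefers):
    """Every pair of bundles is ranked in at least one direction."""
    return all((x, y) in weakly_prefers or (y, x) in weakly_prefers
               for x, y in product(items, repeat=2))

def is_transitive(items, weakly_prefers):
    """If x >= y and y >= z, then x >= z."""
    return all((x, z) in weakly_prefers
               for x, y, z in product(items, repeat=3)
               if (x, y) in weakly_prefers and (y, z) in weakly_prefers)

def is_rational(items, weakly_prefers):
    return is_complete(items, weakly_prefers) and is_transitive(items, weakly_prefers)

def from_ranking(order):
    """Weak preference implied by a strict ranking, best first."""
    return {(x, y) for i, x in enumerate(order) for y in order[i:]}

bundles = ["A", "B", "C"]
cyclic = {(x, x) for x in bundles} | {("A", "B"), ("B", "C"), ("C", "A")}
print(is_rational(bundles, from_ranking(bundles)), is_rational(bundles, cyclic))
```

The cyclic preference is complete, since every pair is ranked, but it violates transitivity, so by the strict definition above it is not rational.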

ADDITIONAL READING: Marco Antonio Guimarães Dias, "Asymmetrical Duopoly under Uncertainty: the Extended Joaquin & Buttler Model," Pontifícia Universidade Católica, Rio de Janeiro, Brazil

Labels: economics, game theory, general equilibrium, rational expectations, risk