Formats & Monopolies

Formats are technologies that must be adopted by many to be useful to any: for example, VHS versus Betamax videocassette systems; SI versus imperial weights and measures; 97-octane gasoline versus all the other possible flammable formulations of hydrocarbons; gauges of railroad track; 525-scanline television sets (USA) versus much higher image quality in the UK, EU, and Japan. In fact, virtually all mature industrial design is thoroughly dictated by format, even when the result is a far less efficient product. The Microsoft Windows operating system is a famous example: there are numerous operating systems that are superior to Windows for many if not all applications, but the vast corpus of available software is written for Windows, the Intel microchips used in most computers are designed for Windows, and in the USA, most workers are trained in Windows.

The presence of formats makes for discontinuous technological change. The adoption of a new format requires large monopoloid firms with a high degree of vertical integration or collaboration, and the new technology must (a) be significantly superior to the old, either in quality or in ease of supply, and (b) increase the demand for the category of product. To make my point, I'd like to get something off my chest about "Moore's Law".

Gordon Moore, an engineer at Fairchild who later co-founded almighty Intel, wrote "Cramming more components onto integrated circuits" (PDF, 1965), in which he described a trend in semiconductors:

For simple circuits, the cost per component is nearly inversely proportional to the number of components, the result of the equivalent piece of semiconductor in the equivalent package containing more components. But as components are added, decreased yields more than compensate for the increased complexity, tending to raise the cost per component. Thus there is a minimum cost at any given time in the evolution of the technology. At present, it is reached when 50 components are used per circuit. But the minimum is rising rapidly while the entire cost curve is falling. If we look ahead five years, a plot of costs suggests that the minimum cost per component might be expected in circuits with about 1,000 components per circuit [....] In 1970, the manufacturing cost per component can be expected to be only a tenth of the present cost.

[p.2a-b]

In other words, the optimal number of components per circuit would double roughly every 14 months, while the cost per component at that optimum would halve about every 18 months (a tenfold drop over five years). Of course, over time, clock speed increased while component cost fell, so computing power per constant dollar grew faster than that. Moore's Law has continued to hold and is expected to hold for at least another decade (see "Nanotechnology for High-Performance Computing," PDF, Chau & Radosavljevic).
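As a rough check on the arithmetic, here is a small sketch that derives the doubling and halving times implied by the figures in Moore's passage: 50 to about 1,000 components per circuit in five years, and cost per component falling to a tenth over the same five years. The function name is my own, and steady exponential change over the period is an assumption.

```python
import math


def months_per_doubling(start, end, years):
    """Months needed for one doubling, assuming steady exponential
    growth from `start` to `end` over `years` (an assumption)."""
    doublings = math.log2(end / start)
    return 12 * years / doublings


# Figures taken from the quoted passage.
component_doubling = months_per_doubling(50, 1000, 5)  # 50 -> ~1,000 components
cost_halving = months_per_doubling(1, 10, 5)           # cost falls tenfold

print(round(component_doubling, 1))  # 13.9
print(round(cost_halving, 1))        # 18.1
```

On these figures the component count doubles about every 14 months, and the cost per component halves about every 18 months.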

In the late 1990s I became curious about Moore's Law: why did it continue to apply? Part of the reason, of course, was constant cross-spillovers in production and design: semiconductors are produced by a complex industrial process, and improvements at any point in the process reduce the log of costs of the final product; that is, costs are multiplicative, so a gain at one stage scales the cost of the whole. This is quite different from, say, the production of aircraft, where costs are additive; if General Electric were to suddenly cut the cost of the GE90-115B engine in half, the cost of the Boeing 777-300 that uses it would not decrease by 50%; it would decrease by about 12%. The GE90-115B itself has to be compatible with other aircraft engines.
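To make the contrast concrete, here is a minimal sketch of the two cost structures. The 24% engine share is not a sourced figure; it is simply what the 50%-to-12% example above implies. The function names and the three-stage chip process are hypothetical.

```python
def additive_cost_drop(component_share, component_cut):
    """Fractional drop in total cost when costs are summed and one
    component (a given share of the total) gets cheaper."""
    return component_share * component_cut


def multiplicative_cost_drop(stage_cuts):
    """Fractional drop in total cost when the final cost is a product
    of per-stage factors and each stage is cut by the given fraction."""
    remaining = 1.0
    for cut in stage_cuts:
        remaining *= 1.0 - cut
    return 1.0 - remaining


# Aircraft: engines assumed to be 24% of the total (implied by the example).
aircraft_drop = additive_cost_drop(0.24, 0.5)

# Chips: halving the cost factor at one of three hypothetical stages.
chip_drop = multiplicative_cost_drop([0.5, 0.0, 0.0])

print(round(aircraft_drop, 2))  # 0.12
print(round(chip_drop, 2))      # 0.5
```

In the additive case a halving at one supplier is diluted by that supplier's share of the total; in the multiplicative case it passes straight through to the final product.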

Also, chip production has a low marginal cost relative to fixed costs. The classic example of such an industry is usually rail transport, but of course an additional 100 km of railroad costs about as much as the previous 100 km, and there is a restricted range of traffic possible over a given distance of track.

However, there is another important reason Moore's Law has been remarkably durable: expenditures on electronics have grown faster yet.

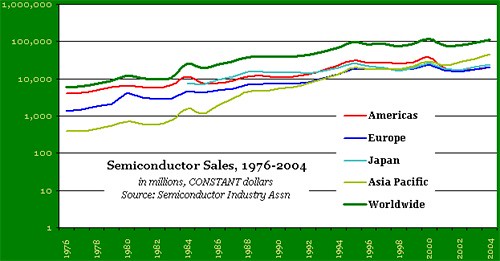

There's a concept in economics called "expenditure elasticity of demand," seldom used in the monographs I've read. It's the proportion by which expenditures on a thing decline as the price goes up. Students of economics are usually accustomed to unrealistic models in which the amount one spends on a good is unresponsive to the price of that good. Certainly one expects the quantity demanded to decline if the price rises, but in a realistic model, expenditures will decline as well; conversely, when the price falls, expenditures rise. And indeed, if we look at the semiconductor industry, we see that sales have grown exponentially over the last 30 years; worldwide, they have roughly doubled every ten years (see figure).
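Here is a rough sketch of how expenditure responds to price under one simple model. It assumes constant-elasticity demand (quantity proportional to price raised to minus the elasticity), which is my simplification and not something the figure establishes; the elasticity values are purely illustrative.

```python
def expenditure_ratio(price_ratio, price_elasticity):
    """New expenditure divided by old expenditure after the price is
    multiplied by `price_ratio`, assuming quantity ~ price ** -elasticity."""
    quantity_ratio = price_ratio ** (-price_elasticity)
    return price_ratio * quantity_ratio


# Price halves in each case; only the (illustrative) elasticity differs.
elastic = expenditure_ratio(0.5, 1.5)    # demand responds strongly
unit = expenditure_ratio(0.5, 1.0)       # spending unresponsive to price
inelastic = expenditure_ratio(0.5, 0.5)  # demand responds weakly

print(round(elastic, 3), round(unit, 3), round(inelastic, 3))
```

Only when the elasticity exceeds one does a falling price raise total spending, which is the case the semiconductor sales figures seem to fit.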

Moore's Law represents a virtuous cycle in technology: increasing demand drives increasing innovation, which drives improvement, which drives demand.

This will end, of course: the amount of capital investment required, the accumulating constraints of formats on semiconductor design, diminishing marginal revenue product in semiconductors, and perhaps even the development of post-semiconductor technology will put the brakes on this virtuous cycle. In the meantime, the locus of the semiconductor industry will continue to change nationality and specialization.
